The payoff

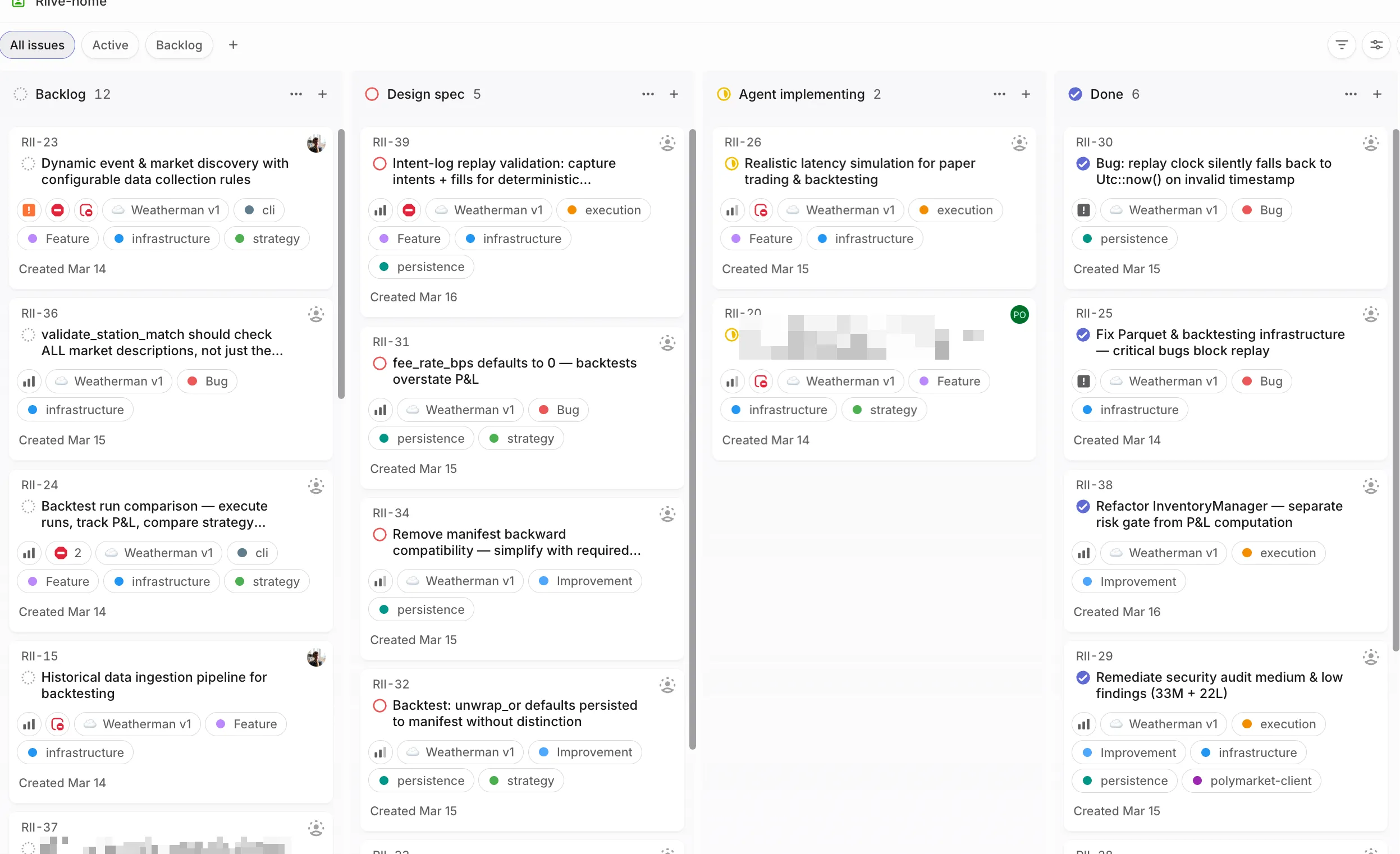

3 sessions running, 3 features in flight. Linear board updating itself. I spend most of my day reading markdowns and reviewing diffs — the actual coding part is the part I don’t do anymore. When I step away, agents keep working. When I come back, there’s a PR ready. It’s genuinely great. Also genuinely unhinged when you say it out loud to someone who doesn’t know what any of this means.

Part 1 got you started. Part 2 taught you the context game. Now let’s put it all together.

The artifact pipeline

If you take one thing from this entire series: specs before code.

Without it you get slop, enshittification, and 6k lines in the bin. With it you build production apps in languages you can’t even read.

Instead of prompting an AI to “build me X”, you create a chain of markdown artifacts where each one feeds the next:

Each phase produces a markdown file. Each phase gets a fresh context window. The artifact is the compressed knowledge that carries forward. This is the whole game.

Phase 1: Research

Dedicated session to research the problem space. Output is a markdown — how the relevant modules work, data flows, constraints.

I use a custom /research command — spawns 4 subagents in parallel, synthesizes findings into a structured markdown:

research_codebase.md — the full research command view source ↗

---

description: Document codebase as-is with comprehensive research

model: opus

---

# Research Codebase

You are tasked with conducting comprehensive research across the codebase to answer user questions by spawning parallel sub-agents and synthesizing their findings.

## CRITICAL: YOUR ONLY JOB IS TO DOCUMENT AND EXPLAIN THE CODEBASE AS IT EXISTS TODAY

- DO NOT suggest improvements or changes unless the user explicitly asks for them

- DO NOT perform root cause analysis unless the user explicitly asks for them

- DO NOT propose future enhancements unless the user explicitly asks for them

- DO NOT critique the implementation or identify problems

- DO NOT recommend refactoring, optimization, or architectural changes

- ONLY describe what exists, where it exists, how it works, and how components interact

- You are creating a technical map/documentation of the existing system

## Initial Setup:

When this command is invoked, respond with:

```

I'm ready to research the codebase. Please provide your research question or area of interest, and I'll analyze it thoroughly by exploring relevant components and connections.

```

Then wait for the user's research query.

## Steps to follow after receiving the research query:

1. **Read any directly mentioned files first:**

- If the user mentions specific files (docs, configs, TOML, JSON), read them FULLY first

- **IMPORTANT**: Use the Read tool WITHOUT limit/offset parameters to read entire files

- **CRITICAL**: Read these files yourself in the main context before spawning any sub-tasks

- This ensures you have full context before decomposing the research

2. **Analyze and decompose the research question:**

- Break down the user's query into composable research areas

- Take time to ultrathink about the underlying patterns, connections, and architectural implications the user might be seeking

- Identify specific components, patterns, or concepts to investigate

- Create a research plan using TodoWrite to track all subtasks

- Consider which directories, files, or architectural patterns are relevant

- Pay special attention to Rust-specific structures: crates, modules, traits, impls, derive macros, feature flags

3. **Spawn parallel sub-agent tasks for comprehensive research:**

- Create multiple Task agents to research different aspects concurrently

- We now have specialized agents that know how to do specific research tasks:

**For codebase research:**

- Use the **codebase-locator** agent to find WHERE files and components live

- Look for `Cargo.toml`, `lib.rs`, `main.rs`, `mod.rs` files to understand crate/module structure

- Search `*.rs` files, `build.rs`, `.cargo/config.toml`

- Use the **codebase-analyzer** agent to understand HOW specific code works (without critiquing it)

- Focus on trait definitions, impl blocks, type aliases, error types, and module hierarchies

- Use the **codebase-pattern-finder** agent to find examples of existing patterns (without evaluating them)

- Look for common Rust patterns: builder pattern, newtype pattern, From/Into impls, error handling patterns, async patterns

**IMPORTANT**: All agents are documentarians, not critics. They will describe what exists without suggesting improvements or identifying issues.

**For web research (use when external context would help):**

- Use the **web-search-researcher** agent for external documentation, crate docs, RFCs, blog posts, and resources

- Spawn web research agents proactively when the topic involves external crates, Rust language features, or ecosystem patterns

- Instruct them to return LINKS with their findings, and please INCLUDE those links in your final report

The key is to use these agents intelligently:

- Start with locator agents to find what exists

- Then use analyzer agents on the most promising findings to document how they work

- Run multiple agents in parallel when they're searching for different things

- Each agent knows its job - just tell it what you're looking for

- Don't write detailed prompts about HOW to search - the agents already know

- Remind agents they are documenting, not evaluating or improving

4. **Wait for all sub-agents to complete and synthesize findings:**

- IMPORTANT: Wait for ALL sub-agent tasks to complete before proceeding

- Compile all sub-agent results

- Connect findings across different crates, modules, and components

- Include specific file paths and line numbers for reference

- Highlight patterns, connections, and architectural decisions

- Answer the user's specific questions with concrete evidence

- Document trait relationships, generic type parameters, and lifetime annotations where relevant

5. **Generate research document:**

- Structure the document with content:

```markdown

# Research: [User's Question/Topic]

**Date**: [Current date and time]

**Git Commit**: [Current commit hash]

**Branch**: [Current branch name]

## Research Question

[Original user query]

## Summary

[High-level documentation of what was found, answering the user's question by describing what exists]

## Crate & Module Structure

[Workspace layout, crate dependencies, module tree relevant to the research]

## Detailed Findings

### [Component/Area 1]

- Description of what exists (file.rs:line)

- How it connects to other components

- Current implementation details (without evaluation)

- Key traits, types, and impls involved

### [Component/Area 2]

...

## Code References

- `path/to/file.rs:123` - Description of what's there

- `another/module/mod.rs:45-67` - Description of the code block

## Architecture Documentation

[Current patterns, conventions, and design implementations found in the codebase]

- Error handling approach (thiserror, anyhow, custom Result types)

- Async runtime usage (tokio, async-std, etc.)

- Serialization patterns (serde derives, custom impls)

- Feature flag organization

## Open Questions

[Any areas that need further investigation]

```

6. **Add GitHub permalinks (if applicable):**

- Check if on main branch or if commit is pushed: `git branch --show-current` and `git status`

- If on main/master or pushed, generate GitHub permalinks:

- Get repo info: `gh repo view --json owner,name`

- Create permalinks: `https://github.com/{owner}/{repo}/blob/{commit}/{file}#L{line}`

- Replace local file references with permalinks in the document

7. **Present findings:**

- Present a concise summary of findings to the user

- Include key file references for easy navigation

- Ask if they have follow-up questions or need clarification

8. **Handle follow-up questions:**

- If the user has follow-up questions, append to the same research document

- Add a new section: `## Follow-up Research [timestamp]`

- Spawn new sub-agents as needed for additional investigation

## Important notes:

- Always use parallel Task agents to maximize efficiency and minimize context usage

- Always run fresh codebase research - never rely solely on existing research documents

- Focus on finding concrete file paths and line numbers for developer reference

- Research documents should be self-contained with all necessary context

- Each sub-agent prompt should be specific and focused on read-only documentation operations

- Document cross-component connections and how systems interact

- Link to GitHub when possible for permanent references

- Keep the main agent focused on synthesis, not deep file reading

- Have sub-agents document examples and usage patterns as they exist

- **CRITICAL**: You and all sub-agents are documentarians, not evaluators

- **REMEMBER**: Document what IS, not what SHOULD BE

- **NO RECOMMENDATIONS**: Only describe the current state of the codebase

- **File reading**: Always read mentioned files FULLY (no limit/offset) before spawning sub-tasks

- **Rust-specific guidance**:

- Explore `Cargo.toml` and `Cargo.lock` for dependency graphs

- Map out workspace members when in a workspace

- Document `pub` visibility boundaries between modules

- Note `#[cfg(...)]` conditional compilation and feature gates

- Track `use` imports to understand module dependency flow

- Identify key derive macros and proc macros in use

- Document unsafe blocks and their safety invariants

- Note any FFI boundaries (`extern "C"`, `#[no_mangle]`)

- **Critical ordering**: Follow the numbered steps exactly

- ALWAYS read mentioned files first before spawning sub-tasks (step 1)

- ALWAYS wait for all sub-agents to complete before synthesizing (step 4)

- NEVER write the research document with placeholder values

And here’s a real output — research artifact from a trading bot project:

Example research artifact: TS Strategy ↔ Deno Runner relationship view source ↗

# Research: TS Strategy ↔ Deno Runner ↔ Session Runner Relationship

**Date**: 2026-03-12

**Git Commit**: 2d0cf45

**Branch**: main

## Research Question

How does the relationship between the TypeScript strategy and the Deno strategy runner look? Can a strategy itself declare which weather data sources (if any) it wants? Will the session runner respect that?

## Summary

Strategies are TypeScript files that run inside an embedded Deno (V8) runtime on the Rust side. Each strategy **must** declare a manifest via `ops.declareManifest({ feeds: [...] })` during `onInit`, specifying which data feeds it needs (`"book"`, `"weather"`, or both). The Rust session runner reads this manifest and respects it: events for undeclared feeds are short-circuited with empty output, never reaching the strategy's `onTick`.

---

## Architecture: Three-Layer Stack

```

┌─────────────────────────────────────────────────────┐

│ TypeScript Strategy (.ts file) │

│ Exports: onInit, onTick, onFill, onShutdown │

│ Calls: ops.declareManifest(), ops.submitIntent() │

└───────────────────┬─────────────────────────────────┘

│ serde_json serialization

┌───────────────────▼─────────────────────────────────┐

│ strategy-runtime crate (Deno embedding) │

│ DenoStrategy: transpile TS→JS, run in V8 │

│ Exposes: on_tick(), on_fill(), manifest() │

│ ops.rs: op_log, op_submit_intent, │

│ op_declare_manifest, op_store_return │

└───────────────────┬─────────────────────────────────┘

│

┌───────────────────▼─────────────────────────────────┐

│ weatherman crate (Session runner) │

│ session_init.rs: initialize_core() │

│ session_core.rs: SessionCore::process_event() │

│ session.rs: tokio::select! event loop │

└─────────────────────────────────────────────────────┘

```

---

## Layer 1: TypeScript Strategy

**Location**: `strategies/` directory

### File Layout

```

strategies/

├── lib/

│ ├── types.d.ts # IDE type declarations (TickContext, Intent, StrategyManifest, etc.)

│ ├── kelly.ts # Kelly criterion library

│ └── ensemble.ts # Ensemble analysis helpers

├── registry/

│ ├── london-weather-brackets/

│ │ ├── v1.ts

│ │ └── v2.ts # Weather + book strategy

│ └── negrisk-sum-arb/

│ └── v1.ts # Book-only strategy (no weather)

├── test-echo.ts # Test: both feeds

├── test-book-only.ts # Test: book feed only

├── test-weather-only.ts # Test: weather feed only

└── test-no-manifest.ts # Test: no manifest (should fail)

```

### Strategy API Contract

Every strategy must export `onInit(config)` and `onTick(ctx)`. Optional exports: `onMarketsReady(markets)`, `onFill(ctx, fill)`, `onShutdown()`.

**Manifest declaration** (required in `onInit`):

```typescript

export function onInit(cfg: Record<string, unknown>): StrategyParams {

ops.declareManifest({ feeds: ["weather", "book"] });

// ...

return { ensembleIqrThreshold: 2.0 };

}

```

The `StrategyManifest` type (`types.d.ts:133-135`):

```typescript

interface StrategyManifest {

feeds: ("book" | "weather")[];

}

```

**Intent submission** — strategies emit intents via `ops.submitIntent()`:

```typescript

ops.submitIntent({

kind: "order", // or "mint" or "merge"

marketId: "...",

outcome: "YES",

side: "buy",

qty: 10,

limitPx: 65,

tif: "GTC",

reason: "...",

});

```

**Tick context differentiation** — strategies distinguish weather ticks from book ticks by checking `ctx.weather`:

```typescript

export function onTick(ctx: TickContext): void {

if (ctx.weather) {

handleWeatherRefresh(ctx); // Weather data present

} else {

handleBookUpdate(ctx); // Pure book update tick

}

}

```

### `onInit` Return Value

`onInit` can optionally return a `StrategyParams` object to tune Rust-side ensemble parameters:

```typescript

interface StrategyParams {

ensembleIqrThreshold?: number;

ensembleBimodalityPenalty?: number;

}

```

These are captured by `DenoStrategy` and used by `SessionCore::fetch_weather_state()` when computing bracket probabilities (`session_core.rs:340-341`).

### Real Strategy Examples

| Strategy | Feeds | Purpose |

|----------|-------|---------|

| `london-weather-brackets/v2.ts` | `["weather", "book"]` | Weather-driven bracket trading with book depth monitoring |

| `negrisk-sum-arb/v1.ts` | `["book"]` | Pure book arbitrage, no weather needed |

| `test-book-only.ts` | `["book"]` | Test fixture for book-only feed |

| `test-weather-only.ts` | `["weather"]` | Test fixture for weather-only feed |

---

## Layer 2: Deno Strategy Runtime

**Crate**: `crates/strategy-runtime/`

**Dependencies**: `deno_core 0.390`, `deno_ast 0.53` (transpiling)

### DenoStrategy Lifecycle (`deno_strategy.rs`)

1. **`DenoStrategy::new(source_path, config)`** (`deno_strategy.rs:107-215`):

- Reads the `.ts` file from disk

- Transpiles TS→JS via `deno_ast` with content-addressed caching (`transpile.rs`)

- Creates a `JsRuntime` with the `strategy_ext` Deno extension (custom ops)

- Bootstraps `globalThis.ops` (log, submitIntent, declareManifest) and removes `globalThis.Deno` for sandboxing

- Loads the strategy as an ES module via `StrategyModuleLoader` (which sandboxes imports to `file://` within the strategies directory)

- Calls `onInit(config)` and captures the return value as `StrategyParams`

- The `StrategyManifest` is stored in Deno's `OpState` by `op_declare_manifest`

2. **`manifest()`** (`deno_strategy.rs:266-270`):

- Returns the `StrategyManifest` from `OpState` (set during `onInit` via `ops.declareManifest()`)

- If strategy never called `declareManifest`, returns `StrategyManifest::default()` (empty feeds)

3. **`on_tick(ctx)`** (`deno_strategy.rs:217-234`):

- Clears the intent collector in `OpState`

- Serializes `TickContext` to JSON and calls `globalThis.__strategy.onTick(ctx)`

- Drains and returns collected `Vec<Intent>`

4. **`on_fill(ctx, fill)`** (`deno_strategy.rs:236-247`):

- Calls `globalThis.__strategy.onFill(ctx, fill)` if exported

### Ops Bridge (`ops.rs`)

Four ops are registered in the `strategy_ext` Deno extension:

| Op | Purpose |

|----|---------|

| `op_log(level, message)` | Route strategy logs to Rust tracing |

| `op_submit_intent(intent)` | Collect an `Intent` into `Vec<Intent>` in OpState |

| `op_declare_manifest(manifest)` | Store `StrategyManifest` in OpState |

| `op_store_return(json)` | Capture return value from `onInit` |

### Module Loader & Sandboxing (`module_loader.rs`)

- Only `file://` imports are allowed (rejects `https://`, `data:`, etc.)

- Path-traversal protection: resolved imports must be within the strategies base directory (uses `canonicalize()`)

- `.ts` files are transparently transpiled via the `TranspileCache`

### Transpile Cache (`transpile.rs`)

- Content-addressed: SHA-256 of source text → cache key

- Two-tier: in-memory HashMap + disk cache with integrity checksums

- Disk cache uses domain-separated SHA-256 signatures (`weatherman-transpile-v1:`)

### Book Buffer (shared Float64Array)

Strategies that declare `"book"` in their feeds get access to `globalThis.books`, a typed accessor over a shared `Float64Array`. Layout per bracket (stride 7):

```

[bid, ask, bidQty, askQty, timestamp, bidDepthUsd, askDepthUsd]

```

Initialized by `init_book_buffer(num_brackets)` and updated by `update_book_buffer()` on the Rust side.

---

## Layer 3: Session Runner (Rust)

### Initialization (`session_init.rs:181-275`)

`initialize_core()` performs these steps:

1. **Create strategy**: `DenoStrategy::new(strategy_path, strategy_config)` — this runs `onInit`, which sets the manifest

2. **Validate manifest**: `strategy.manifest()` — **fails the session if feeds are empty** (line 210-223):

```rust

if manifest.feeds.is_empty() {

error!("strategy did not call ops.declareManifest()...");

return Err(SessionResult { ... });

}

```

3. **Init book buffer** (only if `wants_book`): `strategy.init_book_buffer(market_bindings.len())`

4. **Call onMarketsReady**: passes static market metadata to the strategy

5. **Build SessionCore**: stores `wants_book` and `wants_weather` flags

### SessionCore: Event Processing (`session_core.rs:119-193`)

`process_event()` is the central dispatch. It respects the manifest at two levels:

**WeatherTick events** (line 129-151):

```rust

SessionEvent::WeatherTick => {

if !self.wants_weather {

return Ok(TickOutput { intents: vec![], weather_state: None });

}

// fetch weather, update book buffer, build TickContext with weather, call on_tick

}

```

**BookUpdate events** (line 153-192):

```rust

SessionEvent::BookUpdate { .. } => {

if !self.wants_book {

return Ok(TickOutput { intents: vec![], weather_state: None });

}

// update single bracket in book buffer, build TickContext without weather, call on_tick

}

```

Key: `wants_book` and `wants_weather` are derived from the manifest in `SessionCore::new()` (line 91-92):

```rust

let wants_book = strategy.manifest().wants_book();

let wants_weather = strategy.manifest().wants_weather();

```

### Live Session Event Loop (`session.rs:671+`)

The `tokio::select!` loop has three branches:

1. **Time-based exit deadline** — fires once if configured

2. **Weather timer** (`interval.tick()`) — fires on the configured interval (e.g., every 5 minutes). Calls `core.process_event(SessionEvent::WeatherTick, ...)`. Even though this branch fires for all strategies, `SessionCore::process_event` short-circuits to empty output if the strategy didn't declare `"weather"`.

3. **Book update** (`book_event_rx`) — fires on WebSocket book updates. Calls `core.process_event(SessionEvent::BookUpdate { ... }, ...)`. Similarly short-circuits if strategy didn't declare `"book"`.

**Important**: The weather timer always ticks (it also serves as the market-closure polling mechanism), but the actual weather fetch and strategy callback only happen if `wants_weather` is true.

---

## The Manifest Type (Rust side)

**Location**: `crates/types/src/lib.rs:1193-1217`

```rust

#[derive(Debug, Clone, Serialize, Deserialize)]

#[serde(rename_all = "lowercase")]

pub enum Feed {

Book,

Weather,

}

#[derive(Debug, Clone, Default, Serialize, Deserialize)]

#[serde(rename_all = "camelCase")]

pub struct StrategyManifest {

#[serde(default)]

pub feeds: Vec<Feed>,

}

impl StrategyManifest {

pub fn wants_book(&self) -> bool { self.feeds.contains(&Feed::Book) }

pub fn wants_weather(&self) -> bool { self.feeds.contains(&Feed::Weather) }

}

```

---

## Answer to the Questions

### 1. How does the relationship between the TS strategy and the Deno runner look?

The TS strategy is a plain ES module file. The Rust `strategy-runtime` crate:

- Transpiles it to JS via `deno_ast`

- Runs it inside an embedded V8 via `deno_core::JsRuntime`

- Exposes `globalThis.ops` (log, submitIntent, declareManifest) as the strategy's API surface

- Calls exported functions (`onInit`, `onTick`, `onFill`, `onShutdown`, `onMarketsReady`) at the appropriate lifecycle points

- Collects `Intent` objects from `ops.submitIntent()` calls and returns them to Rust

The strategy has no direct access to Deno APIs, network, or filesystem. It communicates entirely through the ops bridge and receives data via serialized JSON arguments.

### 2. Can the strategy declare which weather data sources it wants?

**Yes.** Every strategy declares its feeds via `ops.declareManifest({ feeds: [...] })` in `onInit`. The available feed types are `"book"` and `"weather"`. A strategy can declare:

- `["book"]` — book updates only (e.g., `negrisk-sum-arb`)

- `["weather"]` — weather updates only

- `["weather", "book"]` — both (e.g., `london-weather-brackets`)

- Empty feeds → session initialization **fails** with an error

Additionally, the strategy's `onInit` return value (`StrategyParams`) can tune the Rust-side weather processing parameters (`ensembleIqrThreshold`, `ensembleBimodalityPenalty`).

However, the strategy **cannot** select specific weather models or data providers. The models fetched (GFS Seamless, ECMWF IFS025 deterministic, ECMWF IFS025 ensemble) are hardcoded in `SessionCore::fetch_weather_state()` (`session_core.rs:302-338`).

### 3. Will the session runner respect the manifest?

**Yes, fully.** The manifest is enforced at two levels:

1. **`session_init.rs`**: Empty manifest (no `declareManifest()` call) → session fails to start

2. **`session_core.rs`**: `process_event()` checks `wants_book` / `wants_weather` and short-circuits undeclared event types to `TickOutput { intents: vec![], weather_state: None }`

This means:

- A book-only strategy never triggers weather API calls

- A weather-only strategy ignores all WebSocket book updates

- Both branches always fire in the `tokio::select!` loop, but the SessionCore gate prevents unnecessary work

## Code References

- `strategies/lib/types.d.ts` — TypeScript type declarations for the strategy API

- `crates/strategy-runtime/src/deno_strategy.rs:107-215` — DenoStrategy::new lifecycle

- `crates/strategy-runtime/src/deno_strategy.rs:266-270` — manifest() accessor

- `crates/strategy-runtime/src/ops.rs:24-29` — op_declare_manifest

- `crates/types/src/lib.rs:1193-1217` — Feed enum, StrategyManifest, wants_book/wants_weather

- `crates/weatherman/src/session_init.rs:208-232` — manifest validation (empty feeds → error)

- `crates/weatherman/src/session_core.rs:79-105` — SessionCore::new captures wants_book/wants_weather

- `crates/weatherman/src/session_core.rs:119-193` — process_event short-circuits on undeclared feeds

- `crates/weatherman/src/session_core.rs:299-393` — fetch_weather_state (hardcoded models)

- `crates/weatherman/src/session.rs:671-900` — tokio::select! event loop

- `strategies/registry/negrisk-sum-arb/v1.ts:69` — book-only manifest example

- `strategies/registry/london-weather-brackets/v2.ts:179` — weather+book manifest example

You’re not implementing anything yet. Just having Claude read the codebase and explain it back to you.

Phase 2: Design doc

Feed the research to a fresh session. Brainstorm a design doc — success criteria, component overview, current vs desired state, gotchas, test cases.

Here’s a real one — P2P chunk fetching for a blockchain node. It’s long af but that’s the point:

Example design doc: PD Chunk P2P Pull view source ↗

# PD Chunk P2P Pull — Design Specification

**Date**: 2026-03-13

**Branch**: rob/pd-chunk-gossiping (off feat/pd)

**Status**: Draft

## Problem Statement

The PD (Programmable Data) service manages an in-memory chunk cache that the EVM's PD precompile reads from during transaction execution. Today, PdService is local-only: it loads chunks exclusively from the node's own StorageModules via `ChunkStorageProvider`. When a chunk is not available locally, two things break:

1. **Block validation**: A peer's block containing PD transactions is rejected as invalid. `shadow_transactions_are_valid` sends `ProvisionBlockChunks` to PdService, which responds `Err(missing)`. The block fails with `ValidationError::ShadowTransactionInvalid` — permanently, with no retry. This means a node that doesn't store the relevant data partition cannot validate blocks with PD transactions.

2. **Block building**: When a PD transaction enters the Reth mempool, `pd_transaction_monitor` fires `NewTransaction` exactly once. PdService marks the tx as `PartiallyReady` if chunks are missing. The tx is never added to `ready_pd_txs`, so the payload builder's `CombinedTransactionIterator` silently skips it on every block build attempt. There is no mechanism to re-attempt provisioning — the tx sits in `PartiallyReady` until Reth evicts it from the mempool.

Both paths need PdService to be able to fetch missing chunks from peers over the network.

## Design Decisions and Alternatives Considered

### Decision 1: Where does the blocking await happen?

**Chosen: CL/Irys side, in `shadow_transactions_are_valid`**

The block validation pipeline has two sides: the Irys consensus layer (CL) and the Reth execution layer (EL). The question was whether to block waiting for chunks on the CL side before submitting to Reth, or on the Reth side during EVM execution.

**Why not Reth side**: The PD precompile runs inside `IrysEvm::transact_raw` — fully synchronous EVM execution. `PdContext::get_chunk()` does a lock-free `DashMap::get()` and immediately returns `Ok(None)` if the chunk is missing, which causes `PdPrecompileError::ChunkNotFound`, the transaction reverts, and Reth marks the block as `PayloadStatusEnum::Invalid`. There is no async machinery inside Reth's block executor to await network fetches. Injecting one would require fundamental changes to Reth's execution model.

**Why CL side works**: `shadow_transactions_are_valid` already contains an unbounded `.await` for `wait_for_payload` (fetching EVM execution payloads from peers, with up to 10 retries at 5-second intervals). The concurrent validation `JoinSet` has no concurrency cap — blocking one block's validation task doesn't stall others. And `exit_if_block_is_too_old` races against `validate_block`, cancelling it if the tip advances more than `block_tree_depth` (50) blocks ahead, providing a natural safety net.

**Block building is unaffected**: The payload builder already gates PD tx inclusion via `ready_pd_txs.contains()`. Only transactions whose chunks are fully provisioned are included. No blocking is needed or desired in the block building path.

### Decision 2: Timeout vs. auto-cancellation

**Chosen: No explicit timeout — rely on the validation service's existing cancellation mechanism**

The validation pipeline already has `exit_if_block_is_too_old`, which races against `validate_block` via `futures::future::select`. If the canonical tip advances more than `block_tree_depth` blocks beyond the block being validated, the validation task is cancelled with `ValidationError::ValidationCancelled`. This provides a natural upper bound on how long a fetch can block.

Adding an explicit timeout would be redundant and would require choosing an arbitrary duration. The auto-cancellation mechanism is adaptive — it fires based on chain progress, not wall-clock time.

**Implementation constraint: `exit_if_block_is_too_old` is progress-based and only fires when the tip advances.** If the network is stalled (no new blocks) and peers do not serve the chunk, validation can hang indefinitely while fetch rounds keep retrying. As defense-in-depth, PdService enforces a `MAX_CHUNK_FETCH_RETRIES` per chunk key. When exceeded, the chunk is failed permanently and the block validation completes with an error. This bounds retry behavior even when the progress-based cancellation cannot fire. See the `on_fetch_done` retry path for the implementation.

PdService implements a retry loop for failed fetches: on failure, it retries with a different assigned peer via a `DelayQueue`-based backoff mechanism. The loop continues until either all chunks arrive, the request is cancelled (block validation cancelled or tx removed from mempool), or `MAX_CHUNK_FETCH_RETRIES` is exceeded.

### Decision 3: Push vs. Pull for missing PD chunks

**Chosen: Pull (request-response)**

The `feat-pd-chunks` branch contains WIP work on push-based PD chunk gossip (broadcast to all `VersionPD` peers). We chose pull instead for the following reasons:

- **Efficiency**: Push broadcasts chunks to all peers regardless of whether they need them. Pull requests only the specific chunks that are missing, from peers that are likely to have them.

- **Latency**: A node validating a block or building a block needs specific chunks now. Waiting for a peer to happen to push them is non-deterministic. Pull gives the requesting node control over timing.

- **Bandwidth**: PD chunks are 256KB each. Broadcasting every PD chunk to every peer is expensive. Pull is targeted.

- **Simplicity**: The gossip pull mechanism (`/gossip/v2/pull_data`) already exists with peer authentication, handshake, and score tracking. Adding a new request variant is minimal work.

Push-based gossip may be added later as an optimization (proactive replication), but pull is the necessary foundation for correctness.

### Decision 4: Reuse DataSyncService vs. PdService owns fetching

**Chosen: PdService owns fetching directly**

DataSyncService was evaluated as a candidate for reuse since it already pulls chunks from peers. The analysis revealed deep structural incompatibilities:

**Coupling to StorageModules**: DataSyncService's `ChunkOrchestrator` requires a local `StorageModule` instance. `ChunkOrchestrator::new` panics without a `partition_assignment`. Queue population reads SM entropy intervals. Throttling reads SM disk throughput. PD chunk requests are for arbitrary `(ledger, offset)` pairs that may not correspond to any local StorageModule.

**Peer selection by slot**: `get_best_available_peers` filters peers by `(ledger_id, slot_index)` matching a local SM's assignment. PD chunks need peers selected by the partition that contains the requested offset — a different lookup entirely.

**Completion path hardwired to SM writes**: `on_chunk_completed` unconditionally calls `sm.write_data_chunk()` and falls back to `ChunkIngressService`. PD chunks need to go into PdService's `ChunkCache` and `ChunkDataIndex` DashMap, not into StorageModules.

**Tick-based, not responsive**: DataSyncService operates on a 250ms tick cycle. PD chunk fetching for block validation needs immediate dispatch — a validation task is blocking on a oneshot response.

**Packed chunks only**: The `GET /v1/chunk/ledger/{id}/{offset}` API endpoint returns `ChunkFormat::Packed` (XOR-encrypted data). PD chunks need unpacked bytes. Adding unpacking adds latency and CPU load.

**Cross-contamination**: Sharing `PeerBandwidthManager` would mix SM sync health scores with PD fetch results, distorting both.

**Priority contention**: DataSyncService uses `UnpackingPriority::Background`. PD chunk fetching is latency-sensitive (a block validation is waiting). Sharing the unpacking queue could delay PD responses.

**Why PdService is the right owner**:

- PdService already tracks exactly which chunks are missing per tx (`TxProvisioningState.missing_chunks`)

- PdService needs to atomically transition `PartiallyReady -> Ready` and update `ready_pd_txs` + `ChunkDataIndex`

- Adding an intermediary service would be a pass-through that complicates the state machine without adding value

### Decision 5: Concurrency model within PdService

**Chosen: Structured concurrency with JoinSet owned by PdService**

PdService is currently a single-threaded actor — its `select!` loop processes messages synchronously. Three options were considered for adding async chunk fetching:

**(A) Spawn-and-callback via channel**: Spawn detached tokio tasks for HTTP fetches, each sending results back via a new internal channel. PdService's `select!` loop gains a second arm. Follows the DataSyncService pattern.

**(B) Dedicated fetcher service**: A separate long-lived `PdChunkFetcherService` task owns the HTTP client. PdService sends fetch requests, receives results. More isolation but adds cross-service coordination.

**(C) JoinSet / FuturesUnordered owned by PdService** (chosen): PdService owns a `JoinSet<PdChunkFetchResult>`. The `select!` loop polls `join_set.join_next()` alongside the message channel. This follows the same pattern as `ValidationCoordinator` which uses `JoinSet<ConcurrentValidationResult>`.

**Why JoinSet**:

- No extra channel needed — results come back directly typed

- Structured concurrency — tasks are owned by the service, automatically cancelled on shutdown when the JoinSet drops

- Clean integration with `tokio::select!` via `join_next()`

- Precedent in the codebase (`ValidationCoordinator.concurrent_tasks`)

### Decision 6: Transport mechanism for pulling chunks

**Chosen: Public HTTP API (primary) with gossip pull fallback**

Four options were considered:

**(A) Fetch packed via existing API endpoint**: Reuse `GET /v1/chunk/ledger/{id}/{offset}`. No API changes, but returns packed (XOR-encrypted) data without `tx_path`. PdService would need to send unpacking requests to the packing service, adding latency and CPU load on the requesting node. No way to verify chunk is bound to the correct ledger position.

**(B) New unpacked-only API endpoint**: Add `GET /v1/chunk/ledger/{id}/{offset}/unpacked`. The serving node always unpacks before sending. Shifts CPU cost to the data holder on every request — unpacking is expensive (entropy recomputation via C/CUDA). Does not benefit from gossip infrastructure.

**(C) Gossip pull only**: Add `PdChunk(u32, u64)` to `GossipDataRequestV2`. Leverages gossip infrastructure (peer auth, scoring, circuit breakers). However, requires gossip handshake authentication — unstaked peers (validator-only nodes) may be unable to initiate pull requests.

**(D) Existing public HTTP API with gossip fallback** (chosen): Reuse the existing `GET /v1/chunk/ledger/{id}/{offset}` endpoint as-is — no API changes. The endpoint always returns `ChunkFormat::Packed`. The requesting node unpacks locally. If the public API fails (peer offline, network error), PdService falls back to gossip pull via `GossipDataRequestV2::PdChunk`. Chunk authenticity is verified locally by the requester via `data_root` derivation from its own MDBX (see Decision 8).

**Why existing public API primary**:

- **Zero API changes**: No new endpoints, no modified responses, fully backwards compatible with all existing nodes

- **No authentication barrier**: Public API requires no gossip handshake or staking verification. Unstaked validator-only nodes can fetch chunks without special configuration.

- **Follows DataSyncService precedent**: `DataSyncService` already fetches chunks via this exact endpoint. This is the established pattern for chunk retrieval.

- **HTTP caching friendly**: Can benefit from future caching infrastructure (reverse proxy, CDN)

**Why gossip fallback**:

- Gossip pull provides an authenticated fallback with peer scoring and circuit breakers

- Useful when the public API is unreachable but the gossip connection is established

- Reuses existing `/gossip/v2/pull_data` infrastructure

**Receiver-side unpacking**: The existing endpoint always returns `ChunkFormat::Packed`. PdService unpacks locally using `irys_packing::unpack()` with entropy derived from `(packing_address, partition_hash, chunk_offset, chain_id)`. The `PackedChunk` struct carries `packing_address` and `partition_hash`, so the receiver has all necessary inputs. The existing `block_validation.rs` already handles packed chunks in its fetch path — this is an established pattern.

**TODO: Future optimization — serve unpacked when cached.** The serving node's MDBX `CachedChunks` table (populated by `ChunkIngressService`) contains unpacked versions of recently-ingested chunks. A future update should extend the endpoint (or add a new one with a query parameter like `?format=auto`) to return `ChunkFormat::Unpacked` when available, avoiding unnecessary unpacking on the receiver. This change requires a backwards-compatible wire format (e.g., new endpoint or opt-in parameter) since existing consumers expect `ChunkFormat::Packed`.

### Decision 7: Peer selection strategy

**Chosen: Targeted by partition assignment**

Three strategies were considered:

**(A) Dumb fan-out**: Ask top N active peers, first to respond wins. Simple but wastes requests to peers that don't have the data.

**(B) Targeted by partition assignment** (chosen): Compute the partition from the ledger offset using `partition_index = offset / num_chunks_in_partition`. Look up assigned miners in `canonical_epoch_snapshot().partition_assignments.data_partitions` for the relevant `(ledger_id, slot_index)`. Resolve those miners to gossip addresses via `PeerList`. Request chunks from those specific peers.

**(C) Targeted with fallback**: Try assigned miners first, fall back to fan-out. More robust but more complex.

**Why targeted**: PD chunks live at specific ledger offsets that map deterministically to partition slots. The epoch snapshot's partition assignments tell us exactly which miners are responsible for storing that data. Requesting from those miners is both efficient (high hit rate) and fast (no wasted requests).

### Decision 8: Validation of pulled chunks

**Chosen: Local `data_root` derivation + `data_path` verification**

Gossip handshake authentication (signature + staking check) provides admission control but not consensus-critical correctness. A Byzantine or compromised staker can return a valid chunk from a different ledger position — it would pass `data_path` validation against its own `data_root` but deliver wrong data for the requested offset. Since PD execution feeds directly into EVM state transitions, the requesting node must verify that the returned chunk is bound to the correct `(ledger, offset)`.

The requester derives the expected `data_root` locally from its own canonical MDBX state — no server-supplied proof (`tx_path`) is needed:

```

ledger_offset → BlockIndex::get_block_bounds() → block height

→ block_header_by_hash() → block header with ordered tx IDs

→ get_data_ledger_tx_ids_ordered(ledger) → ordered tx IDs

→ tx_header_by_txid() for each → accumulate data_size.div_ceil(chunk_size)

→ find the DataTransactionHeader that contains the target offset

→ expected_data_root = tx.data_root (from local trusted DB)

→ validate_path(expected_data_root, data_path, chunk_byte_offset) → proves chunk membership

→ SHA256(unpacked_bytes) == leaf_hash → binds raw data to proof

```

This local derivation is cryptographically equivalent to the `tx_path` proof chain used in PoA validation (`block_validation.rs:1194`) — both establish the binding from `(ledger, offset)` → `data_root`. The difference is that instead of the server providing a merkle proof (`tx_path`) from `tx_root` to `data_root`, the requester looks up the `data_root` directly from its local MDBX. Since `persist_block()` writes block headers, tx headers, and BlockIndex entries atomically in one MDBX transaction (`block_migration_service.rs:242-296`), if `get_block_bounds()` succeeds, the tx headers are guaranteed to be present. Similar DB-loading logic already exists in `load_data_transactions()` (`block_tree.rs:1518`).

**Why local derivation over server-supplied `tx_path`**:

- **Simpler wire protocol**: The server returns just the chunk data (packed or unpacked), no proof

- **No extra server-side work**: The serving node doesn't need to read `tx_path` from SM submodule DB

- **Reuses existing infrastructure**: `get_block_bounds()`, `block_header_by_hash()`, `tx_header_by_txid()` are all existing methods

- **All nodes have the data**: `persist_block()` runs on ALL nodes unconditionally (no role/pledge/miner gating) — validator-only nodes have the same MDBX state

- **Same trust model**: Both `tx_root` (from BlockIndex) and `data_root` (from tx headers) come from the same canonical migrated state

**BlockIndex availability guarantee**: The BlockIndex always has the committing block when a PD tx references that offset. `persist_block()` atomically writes the block header, tx headers, and BlockIndex entry. Only afterward does `ChunkMigrationService::BlockMigrated` fire to write chunk data into storage modules. Since PD txs can only reference chunks that exist in storage, and chunks only exist in storage after migration, the committing block is guaranteed to be in the BlockIndex. (`PD-readable ⟹ BlockIndex present` is always true.)

**Batch optimization**: When fetching multiple chunks from the same block, the `get_block_bounds()` and tx header lookups can be cached — the `data_root` resolution only needs to happen once per DataTransaction boundary.

## Architecture

### Component Diagram

```

PdService (single actor, owns JoinSet)

┌─────────────────────────────────────────────┐

│ │

pd_transaction_monitor │ select! { │

───NewTransaction────> │ shutdown, │

│ msg_rx.recv() => handle_message(), │

shadow_txs_are_valid │ join_set.join_next() => on_fetch_done(), │

──ProvisionBlockChunks>│ } │

│ │

pd_transaction_monitor │ State: │

──TransactionRemoved──>│ cache: ChunkCache (LRU + DashMap mirror) │

│ tracker: ProvisioningTracker │

PdBlockGuard::drop() │ pending_fetches: HashMap<ChunkKey, ...> │

──ReleaseBlockChunks──>│ pending_blocks: HashMap<B256, ...> │

│ join_set: JoinSet<PdChunkFetchResult> │

│ │

│ New deps: │

│ http_client: reqwest::Client │

│ gossip_client: GossipClient │

│ peer_list: PeerList │

│ block_tree: BlockTreeReadGuard │

│ consensus_config: ConsensusConfig │

└──────────────────┬──────────────────────────┘

│ spawns fetch tasks

▼

┌─────────────────────────────────────────────┐

│ Fetch Task (runs in JoinSet) │

│ │

│ 1. Compute partition from (ledger, offset) │

│ 2. Look up assigned miners (epoch snapshot)│

│ 3. Resolve to peer addresses (PeerList) │

│ 4. Try public API first (existing endpoint):│

│ GET /v1/chunk/ledger/{id}/{off} │

│ → always returns Packed │

│ 5. If API fails, fall back to gossip pull: │

│ /gossip/v2/pull_data (PdChunk variant) │

│ → returns Unpacked (cache hit) or Packed│

│ 6. If packed: unpack locally │

│ 7. Return PdChunkFetchResult (ChunkFormat) │

└──────────────────┬──────────────────────────┘

│

▼

┌─────────────────────────────────────────────┐

│ Serving Peer │

│ │

│ Public API: GET /v1/chunk/ledger/{id}/{off}│

│ (existing endpoint, no changes) │

│ → always returns ChunkFormat::Packed │

│ │

│ Gossip fallback: resolve_data_request │

│ PdChunk(ledger, offset) => │

│ get_chunk_for_pd (unpacked or packed) │

└─────────────────────────────────────────────┘

```

### Data Flow: Block Validation Path

```

Peer block arrives with PD transactions

│

▼

BlockDiscoveryService::block_discovered (pre-validation)

│

▼

BlockTreeService::on_block_prevalidated

│

▼

ValidationService::ValidateBlock

│

▼

BlockValidationTask::execute_concurrent

│

├── futures::select(validate_block(), exit_if_block_is_too_old())

│

▼

validate_block():

let cancel = CancellationToken::new();

All 6 tasks wrapped with with_cancel(task, cancel.clone()):

- Each task signals cancel.cancel() on definitive failure

- Each task listens for cancel.cancelled() from siblings

tokio::join!(

with_cancel(recall_task, cancel),

with_cancel(poa_task, cancel),

shadow_tx_task (cancel-aware), ◄── PD chunk provisioning happens here

with_cancel(seeds_task, cancel),

with_cancel(commitment_task, cancel),

with_cancel(data_txs_task, cancel)

)

│

▼

shadow_transactions_are_valid:

1. wait_for_payload (existing, may block for EVM payload)

2. Extract PdChunkSpecs from block's PD transactions

3. Send ProvisionBlockChunks { block_hash, chunk_specs, response } to PdService

4. .await on oneshot response ◄── BLOCKS HERE until chunks fetched

│ or cancelled by exit_if_block_is_too_old

▼

PdService::handle_provision_block_chunks:

1. Convert specs to ChunkKeys via specs_to_keys()

2. For each key:

a. Cache hit → add_reference(key, block_hash)

b. Cache miss, local storage hit → cache.insert, add to DashMap

c. Cache miss, local storage miss → add to missing set

3. If no missing chunks → respond Ok(()), create block_tracker entry

4. If missing chunks:

a. Store oneshot in pending_blocks[block_hash]

b. For each missing key: register in pending_fetches, spawn fetch task

c. Return (don't respond yet — oneshot held open)

│

▼ (async, via JoinSet)

Fetch tasks execute:

1. Compute partition_index = offset / num_chunks_in_partition

2. Look up assigned miners from epoch snapshot

3. Resolve to peer addresses (PeerList) — both API and gossip addresses

4. For each assigned peer (in order):

a. Try public API first: GET http://{peer.api}/v1/chunk/ledger/{id}/{off}

b. If API fails (timeout, 404, network error): try gossip fallback

gossip_client.pull_data(peer, PdChunk(ledger, offset))

c. If Ok(Some(chunk_format)): return Ok(result)

d. If both fail: try next peer

5. If all peers exhausted: return Err(AllPeersFailed)

│

▼

PdService::on_fetch_done (select! arm 3):

On Ok(chunk_format):

1. If packed, unpack locally; if already unpacked (gossip cache hit), use directly:

Packed → irys_packing::unpack() using PackedChunk metadata

Unpacked → use bytes directly

2. Derive expected data_root locally from MDBX:

a. bounds = block_index.get_block_bounds(DataLedger::Publish, key.offset)

b. block_header = block_header_by_hash(bounds.block_hash)

c. tx_ids = block_header.get_data_ledger_tx_ids_ordered(DataLedger::Publish)

d. Walk tx_ids, load each DataTransactionHeader, accumulate

data_size.div_ceil(chunk_size) to find the tx containing key.offset

e. expected_data_root = matching_tx.data_root

3. Verify chunk against expected data_root:

a. Ensure chunk.data_root == expected_data_root

b. validate_path(expected_data_root, &chunk.data_path, chunk_byte_offset_in_tx)

→ confirms chunk membership under data_root

c. SHA256(unpacked_bytes) == leaf_hash → binds raw data to proof

d. On verification failure: mark peer as invalid, retry with different peer

2. Insert chunk bytes into cache with explicit reference per waiter:

a. Pick first waiter (tx or block) as the initial reference:

cache.insert(key, data, first_waiter_id)

b. For each additional waiting block:

cache.add_reference(key, block_hash)

c. For each additional waiting tx:

cache.add_reference(key, tx_hash)

(This ensures every consumer holds a reference — a later

TransactionRemoved or ReleaseBlockChunks cannot drop the only

reference while other consumers still depend on the chunk.)

3. Remove key from pending_fetches, consuming the PdChunkFetchState

4. For each waiting block in the consumed state's waiting_blocks:

a. Remove key from pending_blocks[block_hash].remaining_keys

b. If remaining_keys is empty:

- Respond Ok(()) on the stored oneshot

- Move chunk_keys to block_tracker (same as today's success path)

5. For each waiting tx in the consumed state's waiting_txs:

a. Remove key from tracker[tx_hash].missing_chunks

b. If missing_chunks is empty:

- Transition state to Ready

- Insert tx_hash into ready_pd_txs

On Err(AllPeersFailed { excluded_peers }):

1. Check if any waiters remain (block oneshots not closed, txs not removed)

2. If no waiters remain: remove from pending_fetches, skip retry

3. If MAX_CHUNK_FETCH_RETRIES exceeded: fail_chunk_permanently(key)

4. If waiters remain and retries not exceeded:

a. Compute backoff delay: min(1s * 2^attempt, 30s)

b. Insert RetryEntry into retry_queue (DelayQueue)

c. Store delay_queue::Key in PdChunkFetchState

d. Transition status to FetchPhase::Backoff

PdService::on_retry_ready (select! arm 4, fires when DelayQueue timer expires):

1. Validate generation matches (skip if stale)

2. Check waiters still exist (skip if all removed during backoff)

3. Re-resolve peers via resolve_peers_for_chunk (picks up epoch changes)

4. Spawn new fetch task into JoinSet

5. Store AbortHandle in PdChunkFetchState

6. Transition status to FetchPhase::Fetching

│

▼

shadow_transactions_are_valid receives Ok(()) on the oneshot

→ Creates PdBlockGuard (RAII, sends ReleaseBlockChunks on drop)

→ Continues with shadow tx structural validation

→ Returns (execution_data, pd_guard)

│

▼

All 6 tasks complete via tokio::join!

→ If all Valid: submit_payload_to_reth (Reth executes, precompile reads DashMap)

→ PdBlockGuard drops → ReleaseBlockChunks → cache references decremented

```

### Data Flow: Mempool Path

```

PD transaction enters Reth mempool

│

▼

pd_transaction_monitor detects PD header + access list

→ PdChunkMessage::NewTransaction { tx_hash, chunk_specs }

│

▼

PdService::handle_provision_chunks:

1. Duplicate guard: if tracker.get(&tx_hash).is_some() → return

2. Convert specs to ChunkKeys

3. Register in tracker (state = Provisioning)

4. For each key:

a. Cache hit → add_reference

b. Local storage hit → cache.insert

c. Local storage miss → add to missing set

5. If no missing → state = Ready, ready_pd_txs.insert(tx_hash)

6. If missing:

a. state = PartiallyReady { found, total }

b. For each missing key: register in pending_fetches, spawn fetch task

c. Return (tx stays PartiallyReady)

│

▼ (async, via JoinSet — same as block validation path)

Fetch tasks execute and return results

│

▼

PdService::on_fetch_done:

→ Insert chunk into cache

→ Update all waiting txs

→ When last missing chunk arrives for a tx:

state = Ready, ready_pd_txs.insert(tx_hash)

│

▼

Next block build attempt:

CombinedTransactionIterator::next()

→ ready_pd_txs.contains(&tx_hash) → true

→ PD transaction included in block

→ EVM executes → PD precompile reads from DashMap → succeeds

```

### Data Flow: Cancellation

```

Case 1: Block validation cancelled (tip advanced too far)

─────────────────────────────────────────────────────────

exit_if_block_is_too_old resolves first in futures::select

→ validate_block future dropped

→ oneshot receiver dropped (shadow_tx_task was awaiting it)

│

▼

PdService tries to send Ok(()) on stored oneshot → SendError

→ Remove pending_blocks[block_hash] entry

→ Decrement cache references for any already-fetched chunks

→ Remove block_hash from pending_fetches[key].waiting_blocks

→ If no other waiters for those keys:

Fetching → abort_handle.abort() (cancels in-flight fetch task)

Backoff → retry_queue.remove(&queue_key) (cancels pending timer)

→ cache.try_shrink_to_fit()

Case 2: Mempool transaction removed

────────────────────────────────────

pd_transaction_monitor fires TransactionRemoved

→ PdService::handle_release_chunks (existing logic)

→ Additionally: remove tx_hash from pending_fetches[key].waiting_txs

→ If no other waiters for those keys:

Fetching → abort_handle.abort()

Backoff → retry_queue.remove(&queue_key)

→ Decrement cache references, evict unreferenced chunks

→ cache.try_shrink_to_fit()

Case 3: Sibling validation task fails (CancellationToken)

──────────────────────────────────────────────────────────

A sibling task (e.g., PoA) completes with Invalid

→ with_cancel wrapper calls cancel.cancel()

→ CancellationToken wakes all sibling cancelled() futures

→ shadow_tx_task's select! picks cancelled() → drops inner future

→ oneshot receiver dropped (was awaiting ProvisionBlockChunks response)

│

▼

Same cascade as Case 1:

PdService detects dropped oneshot → abort_handle.abort() or

retry_queue.remove() → eager cleanup of in-flight work

with_cancel helper (used to wrap each validation task uniformly):

async fn with_cancel(

fut: impl Future<Output = ValidationResult>,

cancel: CancellationToken,

) -> ValidationResult {

tokio::select! {

result = fut => {

if matches!(&result, ValidationResult::Invalid(_)) {

cancel.cancel();

}

result

}

_ = cancel.cancelled() => {

ValidationResult::Invalid(ValidationError::ValidationCancelled {

reason: "sibling validation failed".into(),

})

}

}

}

shadow_tx_task uses a similar pattern but with eyre::Result return type:

let shadow_tx_task = {

let cancel = cancel.clone();

async move {

tokio::select! {

result = shadow_tx_task => {

if result.is_err() { cancel.cancel(); }

result

}

_ = cancel.cancelled() => {

Err(eyre::eyre!("cancelled: sibling validation task failed"))

}

}

}

};

No signature changes: the token is created and consumed entirely within

validate_block(). No changes to shadow_transactions_are_valid, poa_is_valid,

or any other validation function signature.

Case 4: PdService shutdown

──────────────────────────

PdService select! loop exits on shutdown signal

→ JoinSet drops → all in-flight fetch tasks cancelled automatically

→ DelayQueue drops → all pending retry timers cancelled

→ No cleanup needed (structured concurrency)

```

## Internal State Changes

### New Types in PdService

```rust

/// Result returned by a chunk fetch task in the JoinSet.

/// ChunkFormat is Unpacked (from gossip MDBX cache hit) or Packed (from API / gossip SM fallback).

struct PdChunkFetchResult {

key: ChunkKey,

result: Result<ChunkFormat, PdChunkFetchError>,

}

/// Phase of a single chunk key's fetch lifecycle.

enum FetchPhase {

/// An HTTP fetch task is running in the JoinSet.

Fetching,

/// Waiting for the backoff timer to expire in the DelayQueue.

Backoff,

}

/// Tracks an in-flight or retrying fetch for a single ChunkKey.

struct PdChunkFetchState {

/// Mempool transactions waiting on this chunk.

waiting_txs: HashSet<B256>,

/// Block validations waiting on this chunk.

waiting_blocks: HashSet<B256>,

/// Number of fetch attempts so far (incremented on each retry).

attempt: u32,

/// Distinguishes provisioning lifecycles. Incremented when a key

/// re-enters Fetching after being cleaned up and reprovisioned.

/// Prevents stale retry timers from acting on a new lifecycle.

generation: u64,

/// Peers that have been tried and failed (passed to the next fetch task).

excluded_peers: HashSet<IrysAddress>,

/// Current phase of the fetch lifecycle.

status: FetchPhase,

/// Handle to cancel the in-flight fetch task (when status == Fetching).

/// Stored from JoinSet::spawn's return value. Used for eager cancellation

/// when all waiters are removed — call abort_handle.abort() to cancel

/// the in-flight HTTP request immediately.

abort_handle: Option<AbortHandle>,

/// Handle to cancel the pending retry timer (when status == Backoff).

/// Used for eager cancellation via retry_queue.remove(&queue_key).

retry_queue_key: Option<delay_queue::Key>,

}

/// Entry stored in the DelayQueue for scheduled retries.

struct RetryEntry {

key: ChunkKey,

attempt: u32,

generation: u64,

excluded_peers: HashSet<IrysAddress>,

}

/// Tracks a block validation waiting for chunks to be fetched.

struct PendingBlockProvision {

/// Chunks still missing for this block.

remaining_keys: HashSet<ChunkKey>,

/// All chunk keys for this block (for reference cleanup).

all_keys: Vec<ChunkKey>,

/// The oneshot sender to respond on when all chunks arrive.

response: oneshot::Sender<Result<(), Vec<(u32, u64)>>>,

}

```

### Modified PdService Struct

```rust

pub struct PdService {

// Existing fields

shutdown: Shutdown,

msg_rx: PdChunkReceiver,

cache: ChunkCache,

tracker: ProvisioningTracker,

storage_provider: Arc<dyn ChunkStorageProvider>,

block_tracker: HashMap<B256, Vec<ChunkKey>>,

ready_pd_txs: Arc<DashSet<B256>>,

// New fields

join_set: JoinSet<PdChunkFetchResult>,

retry_queue: DelayQueue<RetryEntry>,

pending_fetches: HashMap<ChunkKey, PdChunkFetchState>,

pending_blocks: HashMap<B256, PendingBlockProvision>,

http_client: reqwest::Client, // for public API fetch (primary)

gossip_client: GossipClient, // for gossip pull (fallback)

peer_list: PeerList,

block_tree: BlockTreeReadGuard,

block_index: BlockIndexReadGuard, // for local data_root derivation (get_block_bounds)

db: DatabaseProvider, // for tx header lookups (block_header_by_hash, tx_header_by_txid)

num_chunks_in_partition: u64,

}

```

### Modified Event Loop

Active fetches live in a `JoinSet`; retry timers live in a `tokio_util::time::DelayQueue` owned by the actor. This separates retry scheduling from fetch execution — retries are data in a queue, not detached tasks or sleeping sentinels mixed with fetch results.

```rust

async fn start(mut self) {

loop {

tokio::select! {

biased;

_ = &mut self.shutdown => { break; }

msg = self.msg_rx.recv() => {

match msg {

Some(message) => self.handle_message(message),

None => { break; }

}

}

Some(result) = self.join_set.join_next() => {

self.on_fetch_done(result);

}

Some(expired) = self.retry_queue.next(), if !self.retry_queue.is_empty() => {

self.on_retry_ready(expired.into_inner());

}

}

}

}

```

**`on_fetch_done` retry path** (pseudocode):

```rust

fn on_fetch_done(&mut self, result: JoinResult<PdChunkFetchResult>) {

let PdChunkFetchResult { key, result } = /* unwrap join result */;

match result {

Ok(chunk_format) => { /* unpack if packed, derive expected data_root from MDBX, verify, cache chunk, notify waiters */ }

Err(PdChunkFetchError::AllPeersFailed { excluded_peers }) => {

let Some(state) = self.pending_fetches.get_mut(&key) else { return };

// No waiters left — skip retry, clean up

if state.waiting_txs.is_empty() && state.waiting_blocks.is_empty() {

self.pending_fetches.remove(&key);

return;

}

// Exceeded max retries — fail permanently

if state.attempt >= MAX_CHUNK_FETCH_RETRIES {

self.fail_chunk_permanently(key);

return;

}

// Schedule retry via DelayQueue

let delay = backoff_duration(state.attempt); // min(1s * 2^attempt, 30s)

let retry_entry = RetryEntry {

key,

attempt: state.attempt + 1,

generation: state.generation,

excluded_peers,

};

let queue_key = self.retry_queue.insert(retry_entry, delay);

state.attempt += 1;

state.status = FetchPhase::Backoff;

state.retry_queue_key = Some(queue_key);

state.abort_handle = None; // no in-flight task during backoff

state.excluded_peers = excluded_peers;

}

}

}

```

**`on_retry_ready` handler** (pseudocode):

```rust

fn on_retry_ready(&mut self, entry: RetryEntry) {

let Some(state) = self.pending_fetches.get_mut(&entry.key) else { return };

// Stale generation — this retry belongs to an old provisioning lifecycle

if state.generation != entry.generation { return; }

// Waiters vanished during backoff

if state.waiting_txs.is_empty() && state.waiting_blocks.is_empty() {

self.pending_fetches.remove(&entry.key);

return;

}

// Re-resolve peers (picks up epoch changes, new peers coming online)

let peers = self.resolve_peers_for_chunk(&entry.key, &entry.excluded_peers);

let abort_handle = self.join_set.spawn(

fetch_chunk_from_peers(entry.key, peers, self.gossip_client.clone())

);

state.status = FetchPhase::Fetching;

state.abort_handle = Some(abort_handle);

state.retry_queue_key = None;

}

```

**Cancellation path**: When all waiters for a key are removed (tx removed + block released), PdService checks `status`:

- `Fetching` → call `abort_handle.abort()` to cancel the in-flight fetch task

- `Backoff` → call `self.retry_queue.remove(&queue_key)` to cancel the pending timer

Both are eager cancellation — no dangling tasks or timers survive waiter cleanup. Pending retries are entries in a queue, not spawned tasks. Queue length is bounded by the number of tracked chunk keys.

### Partition-to-Peer Resolution

**Ledger ID**: Currently, `specs_to_keys()` hardcodes `ledger: 0` for all PD chunks (`pd_service.rs:155`). All PD chunk data lives on the Publish ledger (ledger 0). The `GossipDataRequestV2::PdChunk(u32, u64)` carries the ledger ID for forward-compatibility, but in practice it will be 0. The `resolve_peers_for_chunk` method uses the Publish ledger (`DataLedger::Publish`) for partition assignment lookups.

**Partition assignment lookup**: The `PartitionAssignments::data_partitions` is a `BTreeMap<PartitionHash, PartitionAssignment>` keyed by partition hash, not by index. To find which miners are assigned to the partition containing a given offset, we must iterate the entries and filter by matching `ledger_id` and computed `slot_index`. The `slot_index` is derived from the ledger offset: `slot_index = offset / num_chunks_in_partition`. Each `PartitionAssignment` has a `slot_index` field that can be compared.

**Self-peer filtering**: The resolved peer list must exclude the node's own miner address to avoid requesting chunks from itself.

```rust

fn resolve_peers_for_chunk(&self, key: &ChunkKey) -> Vec<PeerAddress> {

let slot_index = key.offset / self.num_chunks_in_partition;

let ledger_id = DataLedger::Publish;

let epoch_snapshot = self.block_tree.canonical_epoch_snapshot();

let assignments = &epoch_snapshot.partition_assignments.data_partitions;

let mut peers = Vec::new();

for (_hash, assignment) in assignments.iter() {

if assignment.ledger_id == Some(ledger_id)

&& assignment.slot_index == slot_index

&& assignment.miner_address != self.own_miner_address // filter self

{

if let Some(peer) = self.peer_list.peer_by_mining_address(&assignment.miner_address) {

peers.push(peer.address.clone());

}

}

}

peers

}

```

**Epoch snapshot choice**: We use `canonical_epoch_snapshot()` (the snapshot from the canonical chain tip) rather than a fork-specific snapshot. This is correct because we are looking up which miners *store* data, not validating consensus. Storage assignments are determined by the canonical chain regardless of which fork a block being validated is on.

### Fetch Deduplication

When multiple transactions or blocks need the same chunk:

```

NewTransaction(tx_A) needs chunks [C1, C2, C3]

→ C1: not in pending_fetches → spawn fetch, register tx_A as waiter

→ C2: not in pending_fetches → spawn fetch, register tx_A as waiter

→ C3: not in pending_fetches → spawn fetch, register tx_A as waiter

ProvisionBlockChunks(block_X) needs chunks [C2, C4]

→ C2: already in pending_fetches (InFlight) → just add block_X as waiter

→ C4: not in pending_fetches → spawn fetch, register block_X as waiter

When C2 fetch completes:

→ Insert into cache

→ Update tx_A: remove C2 from missing_chunks (2 remaining: C1, C3)

→ Update block_X: remove C2 from remaining_keys (1 remaining: C4)

```

## Public API Endpoint

### Existing Route: `GET /v1/chunk/ledger/{ledger_id}/{offset}` (no changes)

PdService uses the existing public chunk endpoint as-is. No modifications to the endpoint, handler, or `ChunkStorageProvider` trait are needed.

The existing endpoint always returns `ChunkFormat::Packed(PackedChunk)` (`get_chunk.rs:34`). The `PackedChunk` includes all metadata needed by the requester: `data_root`, `data_size`, `data_path` (merkle proof), `packing_address`, `partition_hash`, `partition_offset`.

The requester unpacks locally using `irys_packing::unpack()` and verifies the chunk against a locally-derived `data_root` (see Decision 8).

**Implementation constraint: Rate limiting.** The existing chunk endpoint has no rate limiting. Bulk PD chunk fetching during block validation could overload peers. Options for future hardening: (a) add a semaphore similar to `chunk_semaphore` used for inbound chunk gossip, (b) leverage Actix middleware for per-peer rate limiting.

**TODO: Future optimization — serve unpacked when cached.** The serving node's MDBX `CachedChunks` table (populated by `ChunkIngressService`) contains unpacked versions of recently-ingested chunks. A future update should add a new endpoint or query parameter (e.g., `?format=auto`) to return `ChunkFormat::Unpacked` when available, avoiding unnecessary unpacking on the receiver. This must be backwards-compatible since existing consumers expect `ChunkFormat::Packed`.

## Gossip Protocol Changes (Fallback Path)

### Wire Type Additions (`crates/types/src/gossip.rs`)

The gossip pull path serves as a fallback when the public API is unreachable.

```rust

// Add to GossipDataRequestV2:

pub enum GossipDataRequestV2 {

ExecutionPayload(B256),

BlockHeader(BlockHash),

BlockBody(BlockHash),

Chunk(ChunkPathHash),

Transaction(H256),

PdChunk(u32, u64), // NEW: (ledger_id, ledger_offset)

}

// V1 compatibility:

impl GossipDataRequestV2 {

pub fn to_v1(&self) -> Option<GossipDataRequestV1> {

match self {

// ... existing variants ...

Self::PdChunk(..) => None, // V1 peers cannot serve PD chunks

}

}

}

// Add to GossipDataV2 (response enum):

pub enum GossipDataV2 {

// ... existing variants (ExecutionPayload, BlockHeader, BlockBody, Chunk, Transaction) ...

PdChunk(ChunkFormat), // NEW: unpacked from MDBX cache if available, packed otherwise

}

```

### Gossip Serving Handler (`crates/p2p/src/gossip_data_handler.rs`)

`resolve_data_request` gains a new match arm using the same `get_chunk_with_tx_path` trait method:

```rust

GossipDataRequestV2::PdChunk(ledger, offset) => {

// Try MDBX CachedChunks first for unpacked version, fall back to packed from SM

match self.storage_provider.get_chunk_for_pd(ledger, offset.into()) {

Ok(Some(chunk_format)) => {

Some(GossipDataV2::PdChunk(chunk_format))

}

Ok(None) => None,

Err(e) => {

warn!(ledger, offset, "Error serving PD chunk: {}", e);

None

}

}

}

```

This requires a new method `get_chunk_for_pd` on `ChunkStorageProvider` that checks the MDBX `CachedChunks` table for an unpacked version (populated by `ChunkIngressService`), and falls back to the packed chunk from the storage module. No on-the-fly unpacking — the server only returns unpacked if it was already cached. Wiring `Arc<ChunkProvider>` into `GossipDataHandler` is still needed. The `ChunkProvider` is already constructed in `chain.rs` and can be passed through `P2PService::run` into `GossipDataHandler::new`. `GossipDataHandler` is in `crates/p2p` and only needs the `ChunkStorageProvider` trait (from `irys-types`, which `p2p` already depends on).

## Cache Insertion: ChunkFormat to Bytes Conversion

The fetch returns a `ChunkFormat` — packed from the public API (always), or packed/unpacked from the gossip fallback (unpacked when the serving peer has it in MDBX cache). The `ChunkCache` stores `Arc<Bytes>` (raw unpacked chunk data), and the `ChunkDataIndex` DashMap maps `(u32, u64) → Arc<Bytes>`.

When a pulled chunk passes verification (local `data_root` derivation + `data_path` + leaf hash), PdService extracts the raw bytes for cache insertion:

```rust

// Unpack if packed; use directly if already unpacked (gossip cache hit)

let unpacked_bytes = match chunk_format {

ChunkFormat::Packed(packed) => {

irys_packing::unpack(&packed, &packing_config)?.bytes

}

ChunkFormat::Unpacked(chunk) => chunk.bytes,

};

let data: Arc<Bytes> = Arc::new(Bytes::from(unpacked_bytes));

// Insert with explicit reference per waiter (see on_fetch_done):

self.cache.insert(key, data.clone(), first_waiter_id);

for other_waiter in remaining_waiters {

self.cache.add_reference(key, other_waiter);

}

// cache.insert internally mirrors to the ChunkDataIndex DashMap

```

The `data_path`, `data_root`, and `data_size` are used only for verification and then discarded.

**TODO: When the public API endpoint is updated to also return unpacked chunks from MDBX cache (see Decision 6 TODO), the public API path will also benefit from skipping the unpack step.**

## Validation of Pulled Chunks

When PdService receives a `ChunkFormat` from a peer (packed from public API, packed or unpacked from gossip), it unpacks if needed, then verifies the chunk is authentic for the requested `(ledger, offset)` before inserting into the cache. Verification uses the requester's own canonical MDBX state — no server-supplied proof is needed.

**Verification pseudocode in `on_fetch_done`**:

```rust

// 0. Unpack if packed; use directly if already unpacked (gossip cache hit)

let (data_root, data_path, unpacked_bytes) = match chunk_format {

ChunkFormat::Packed(packed) => {

let unpacked = irys_packing::unpack(&packed, &packing_config)?;

(packed.data_root, packed.data_path, unpacked.bytes)

}

ChunkFormat::Unpacked(chunk) => {

(chunk.data_root, chunk.data_path, chunk.bytes)

}

};

// 1. Derive expected data_root locally from MDBX

let bounds = block_index.get_block_bounds(DataLedger::Publish, LedgerChunkOffset::from(key.offset))?;

let block_header = block_header_by_hash(&db_tx, &bounds.block_hash)?;

let tx_ids = block_header.get_data_ledger_tx_ids_ordered(DataLedger::Publish)?;

// Walk tx headers, accumulating chunk counts to find the tx containing key.offset

let mut running_offset = bounds.start_chunk_offset;

let mut expected_data_root = None;

for tx_id in tx_ids {

let tx_header = tx_header_by_txid(&db_tx, tx_id)?;

let num_chunks = tx_header.data_size.div_ceil(chunk_size);

if key.offset < running_offset + num_chunks {

expected_data_root = Some(tx_header.data_root);

break;

}

running_offset += num_chunks;

}

let expected_data_root = expected_data_root.ok_or("offset not found in block txs")?;

// 2. Verify chunk's data_root matches the locally derived expected value

ensure!(data_root == expected_data_root);

// 3. Verify data_path: expected_data_root → chunk bytes

let data_path_result = validate_path(expected_data_root.0, &data_path, chunk_end_byte)?;

ensure!(SHA256(unpacked_bytes) == data_path_result.leaf_hash);

```

If any verification step fails, the chunk is rejected, the peer is marked as having returned invalid data, and the fetch retries with a different peer.

**Batch optimization**: The `get_block_bounds` result and tx header lookups can be cached when fetching multiple chunks from the same block — the `data_root` resolution only needs to happen once per `DataTransaction` boundary.

## File Change Summary

| File | Change |

|---|---|

| `crates/types/src/gossip.rs` | Add `GossipDataRequestV2::PdChunk(u32, u64)` request variant, `GossipDataV2::PdChunk(ChunkFormat)` response variant, `to_v1()` returns `None` |

| `crates/types/src/chunk_provider.rs` | Add `get_chunk_for_pd` method to `ChunkStorageProvider` trait, returning `eyre::Result<Option<ChunkFormat>>` (checks MDBX cache for unpacked, falls back to packed from SM) |

| `crates/domain/src/models/chunk_provider.rs` | Implement `get_chunk_for_pd` on `ChunkProvider`: check MDBX `CachedChunks` for unpacked version first, fall back to packed from storage module. No on-the-fly unpacking. |

| `crates/api-server/src/routes/get_chunk.rs` | No changes (existing endpoint used as-is, always returns packed) |

| `crates/p2p/src/gossip_data_handler.rs` | Handle `PdChunk` in `resolve_data_request` using `get_chunk_for_pd`; add `storage_provider: Arc<dyn ChunkStorageProvider>` field |

| `crates/p2p/src/gossip_client.rs` | Add `pull_data_from_peers` method that accepts a pre-selected list of peer addresses (instead of using `top_active_peers`). Follows the same retry/handshake pattern as `pull_data_from_network` but with caller-supplied peers. |

| `crates/p2p/src/gossip_service.rs` | Pass `ChunkProvider` through to `GossipDataHandler` construction |

| `crates/actors/src/pd_service.rs` | Add `JoinSet`, `DelayQueue<RetryEntry>`, `pending_fetches`, `pending_blocks`, `own_miner_address`, new deps (`reqwest::Client`, `GossipClient`, `PeerList`, `BlockTreeReadGuard`, `BlockIndexReadGuard`, `DatabaseProvider`, `ConsensusConfig`). Refactor `handle_provision_chunks` and `handle_provision_block_chunks` to spawn fetch tasks on miss. Add `on_fetch_done` handler with local `data_root` derivation from MDBX, `irys_packing::unpack()`, `DelayQueue`-based retry logic. Add `on_retry_ready` handler. Add third and fourth `select!` arms for `join_set.join_next()` and `retry_queue.next()`. |

| `crates/actors/src/validation_service/block_validation_task.rs` | Add `CancellationToken` + `with_cancel` wrapper in `validate_block()` to cancel sibling tasks (including PD fetches) when any task fails definitively. No signature changes to individual validation functions. |

| `crates/actors/src/pd_service/provisioning.rs` | Add `PartiallyReady -> Ready` transition method callable from `on_fetch_done`. Extend `TxProvisioningState` or add helper for checking/clearing `missing_chunks`. |

| `crates/actors/src/pd_service/cache.rs` | No structural changes (insert/remove/reference tracking already work) |

| `crates/chain/src/chain.rs` | Pass `reqwest::Client`, `GossipClient`, `PeerList`, `BlockTreeReadGuard`, `BlockIndexReadGuard`, `DatabaseProvider`, `ConsensusConfig`, `own_miner_address` to `PdService::spawn_service` |

| `crates/chain-tests/src/programmable_data/pd_chunk_p2p_pull.rs` | **New file.** Integration tests for PD chunk P2P pull: happy path, multiple PD txs, chunk deduplication, mempool path. Shared `setup_pd_p2p_test()` helper. |

| `crates/chain-tests/src/programmable_data/mod.rs` | Add `mod pd_chunk_p2p_pull;` |

## Edge Cases

### Chunk needed by both mempool tx and block validation simultaneously

Handled by `pending_fetches` deduplication. A single `ChunkKey` entry tracks both `waiting_txs` and `waiting_blocks`. Only one fetch task is spawned. When the chunk arrives, both the tx's `missing_chunks` and the block's `remaining_keys` are updated.

### Oneshot receiver dropped before response sent